For over a decade, the ability to "sideload" applications—installing software from third-party sources rather than the official Google Play Store—has been a defining characteristic of the Android operating system. This openness has long served as the primary differentiator between Google’s mobile ecosystem and the "walled garden" approach favored by Apple’s iOS. However, as the global landscape of mobile security shifts toward combating increasingly sophisticated social engineering and financial fraud, Google is preparing to implement the most significant changes to the sideloading process in the history of the platform. While the core functionality of sideloading is not being removed, the introduction of new "friction points" represents a fundamental pivot in how Android balances user freedom with digital safety.

The upcoming changes are designed to address a specific and growing category of cybercrime: "vishing" (voice phishing) and remote-access scams. In these scenarios, bad actors often coerce unsuspecting users into downloading malicious Android Package Kits (APKs) that give the attacker control over the device or access to sensitive banking information. To mitigate these risks, Google is introducing a multi-layered verification and "cooling-off" system that will substantially change the experience for anyone who installs apps from outside the Play Store. The seven key pillars of the new rules are worth understanding for every Android user, from casual owners to power users and developers.

First, the entry point is a familiar one. To begin the new process, users must enable Android's Developer options (often called "Developer Mode"). This remains a deliberate, multi-step ritual: open the system settings, find the "About phone" section, and tap "Build number" seven times until a notification confirms that developer options are on. This "secret handshake" has long kept debugging and other advanced settings out of casual reach, so that only users with clear technical intent get past it. By building on this existing mechanism, Google preserves a sense of continuity for long-time enthusiasts, even as the subsequent steps become significantly more rigorous.

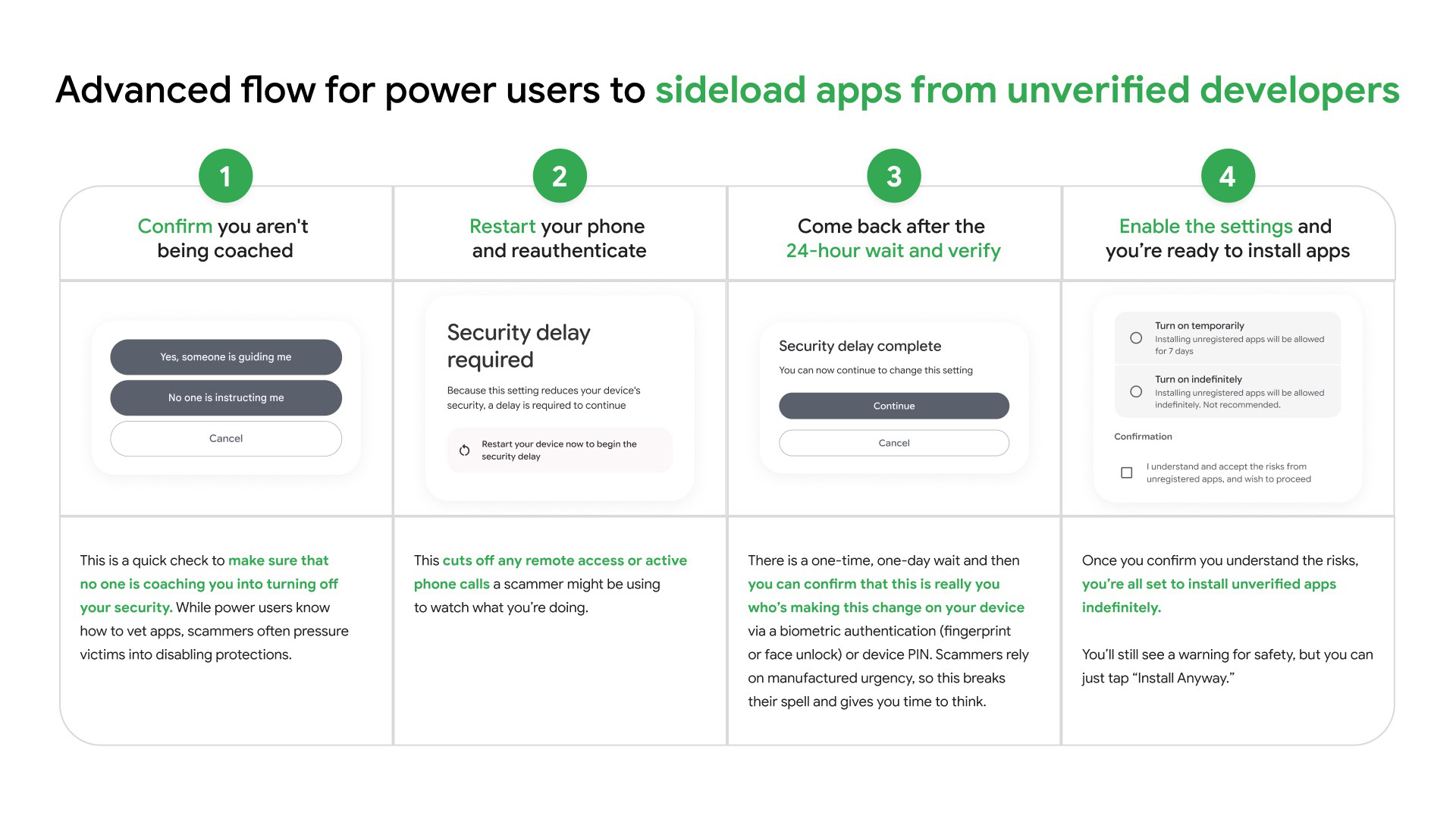

Once Developer Mode is active, the new protocol introduces immediate psychological and technical barriers. The second major change involves a new series of "anti-coercion" prompts. When a user attempts to install an unverified app, the system will trigger a full-screen warning asking the user to confirm that they are acting of their own volition and are not being pressured by a third party. This is a direct response to the "tech support" scam model, where a fraudster remains on the phone with a victim, guiding them through the installation of malware. By forcing a moment of reflection, Google hopes to break the spell of high-pressure social engineering.

Third, following this confirmation, the system requires a restart. Users must reboot their devices before the sideloading permission can be finalized. This is perhaps the cleverest technical hurdle in the new framework. A restart ends any active phone call or screen-sharing session and disconnects the remote-access tools a scammer might be using to watch the victim's screen. It creates a physical and digital break, a "safety gap" in which the user regains control of the device and may realize they are in the middle of a fraudulent interaction.

The fourth and perhaps most controversial pillar is a 24-hour "cooling-off" period. Once the device has been restarted and the intent to sideload has been logged, the user must wait a full 24 hours before installing any unverified application. A mandatory wait of this length is unusual among mobile operating systems. It will undoubtedly frustrate power users who want to test new software immediately, but the security logic is clear: scammers rely on urgency. A day-long delay kills the momentum of a scam. A victim being pressured into a "limited-time" fix for a non-existent virus will have 24 hours for the adrenaline to fade and for friends or family members to intervene.
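
The timing rule itself is simple arithmetic on timestamps. Here is a minimal Python sketch of the check, not Google's code: it assumes the clock starts when the intent to sideload is logged after the restart, and `COOLING_OFF`, `unlock_time`, and `cooling_off_elapsed` are hypothetical names chosen for illustration.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical constant: the 24-hour cooling-off period described above.
COOLING_OFF = timedelta(hours=24)


def unlock_time(intent_logged_at: datetime) -> datetime:
    """Earliest moment an unverified install may proceed (assumed rule)."""
    return intent_logged_at + COOLING_OFF


def cooling_off_elapsed(intent_logged_at: datetime, now: datetime) -> bool:
    """True once a full 24 hours have passed since the intent was logged."""
    return now >= unlock_time(intent_logged_at)


if __name__ == "__main__":
    logged = datetime(2025, 3, 1, 21, 30, tzinfo=timezone.utc)
    print(unlock_time(logged))  # 2025-03-02 21:30:00+00:00
    print(cooling_off_elapsed(logged, logged + timedelta(hours=23)))  # False
    print(cooling_off_elapsed(logged, logged + timedelta(hours=24)))  # True
```

An attempt at hour 23 fails and one at hour 24 succeeds, so no "act within the next hour" deadline a scammer sets can be met. A real implementation would presumably rely on a tamper-resistant clock, since otherwise the system time could simply be wound forward; this sketch ignores that concern.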

Fifth, to keep this delay from becoming a recurring cost, Google pairs it with a persistent authorization step. Once the 24-hour waiting period has elapsed, the user must authenticate with biometrics, either a fingerprint scan or face unlock. At this stage the user chooses between two options: authorize sideloading for a temporary seven-day window, or enable it indefinitely. If the user selects the indefinite option, the device "remembers" this preference and will not require the restart or the 24-hour wait for future installations. The initial setup is arduous, but the long-term experience for legitimate power users remains relatively fluid.
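
The two authorization options can be modeled as a grant with an optional expiry. The sketch below is a hypothetical Python model, not Android's actual data structure: `SideloadGrant`, `TEMPORARY_WINDOW`, and `is_active` are illustrative names, and it assumes the seven-day window is measured from the moment of biometric authorization.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical constant: the temporary authorization window from the article.
TEMPORARY_WINDOW = timedelta(days=7)


@dataclass(frozen=True)
class SideloadGrant:
    """Hypothetical record created after biometric authentication succeeds."""

    granted_at: datetime
    indefinite: bool  # True if the user chose "indefinitely" over seven days

    @property
    def expires_at(self) -> Optional[datetime]:
        # Indefinite grants never expire; temporary grants last seven days
        # from the moment of authorization (an assumption of this sketch).
        if self.indefinite:
            return None
        return self.granted_at + TEMPORARY_WINDOW

    def is_active(self, now: datetime) -> bool:
        """True while unverified installs are allowed under this grant."""
        expiry = self.expires_at
        return expiry is None or now < expiry


if __name__ == "__main__":
    t0 = datetime(2025, 3, 3, 9, 0, tzinfo=timezone.utc)
    weekly = SideloadGrant(granted_at=t0, indefinite=False)
    forever = SideloadGrant(granted_at=t0, indefinite=True)
    print(weekly.is_active(t0 + timedelta(days=6)))     # True
    print(weekly.is_active(t0 + timedelta(days=7)))     # False
    print(forever.is_active(t0 + timedelta(days=365)))  # True
```

Storing `None` as the expiry for the indefinite option mirrors the device "remembering" the preference. What happens after a seven-day grant lapses (a fresh biometric check, or the full restart-and-wait flow) is not specified, so the sketch deliberately leaves it out.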

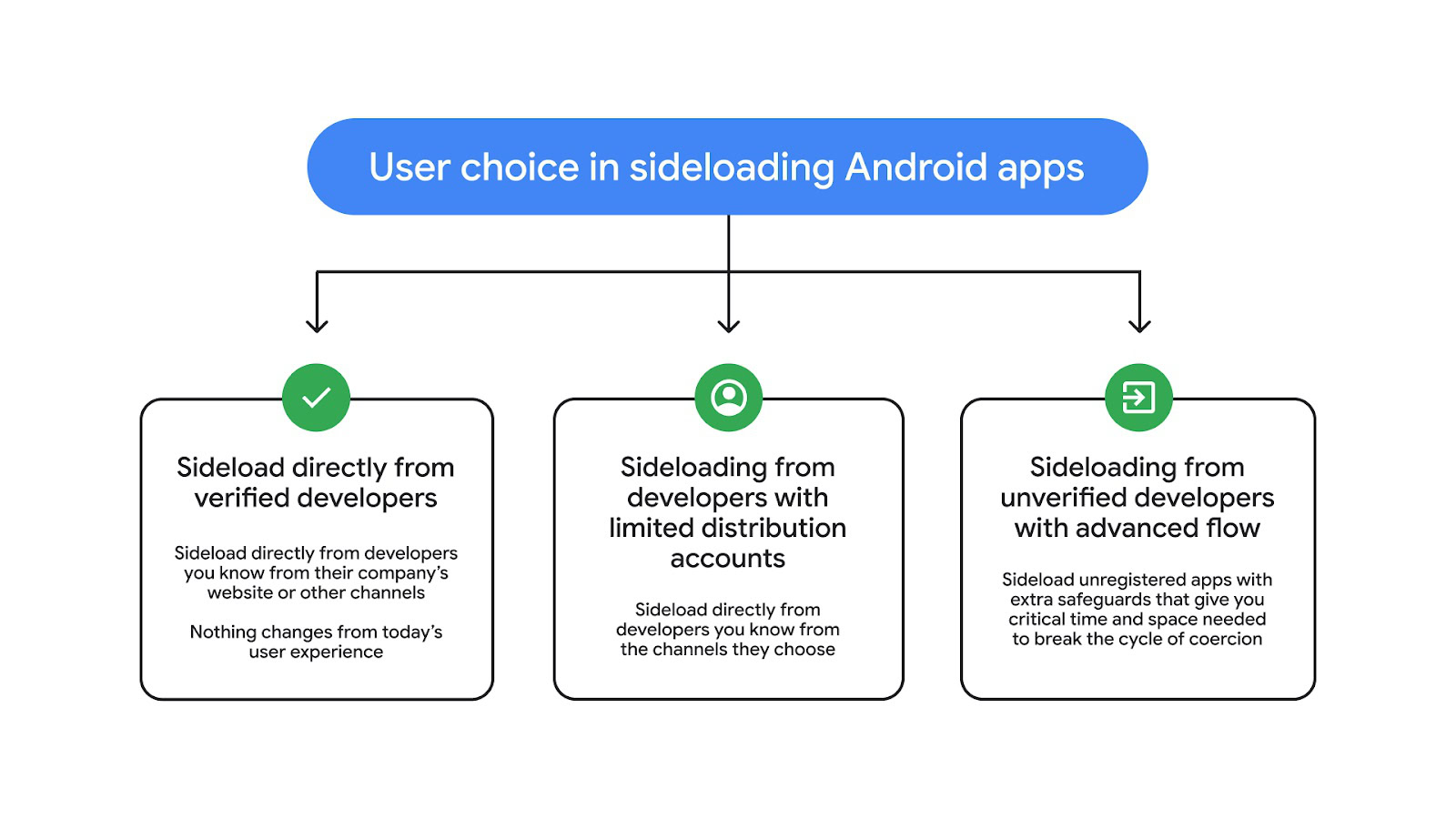

The sixth component of this overhaul is a new "Verified Developer" tier for apps distributed outside the Play Store. Google recognizes that not all such apps are dangerous; many are legitimate tools distributed by independent creators or through alternative app stores like F-Droid. To accommodate these developers, Google is launching a verification program: for a one-time fee of $25, developers can verify their identity with Google. Apps from a verified developer bypass the aggressive new restrictions, so users can sideload them as easily as they do today. This creates a middle ground in the Android ecosystem: apps that are not hosted on the Play Store but whose developer has passed a basic identity check and can be held accountable.

Seventh, Google is carving out an exception for the "tinkerer" community through limited distribution accounts. Apps distributed to a very small number of people, specifically 20 users or fewer, will not be subject to the new sideloading hurdles. This "hobbyist rule" matters for students learning to code, developers testing early builds with a small team, and families using custom-built private apps. By exempting these low-volume distributions, Google maintains the "open lab" spirit of Android without leaving a large loophole for mass malware campaigns.
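
Taken together, the verified-developer tier and the hobbyist exception amount to a simple decision about which path an install takes. A hypothetical Python sketch of that decision follows; `InstallPath`, `HOBBYIST_LIMIT`, and `install_path` are illustrative names, and in practice the 20-recipient cap would presumably be enforced through the developer's account type rather than counted on the phone.

```python
from enum import Enum


class InstallPath(Enum):
    STANDARD = "standard"  # installs as easily as sideloading does today
    ADVANCED = "advanced"  # prompt, restart, 24-hour wait, biometrics


# Hypothetical threshold taken from the "20 users or fewer" rule.
HOBBYIST_LIMIT = 20


def install_path(developer_verified: bool, recipient_count: int) -> InstallPath:
    """Pick the install path for a sideloaded app (hypothetical policy model).

    Apps from verified developers and very small distributions skip the new
    hurdles; everything else goes through the advanced flow.
    """
    if recipient_count < 0:
        raise ValueError("recipient_count must be non-negative")
    if developer_verified or recipient_count <= HOBBYIST_LIMIT:
        return InstallPath.STANDARD
    return InstallPath.ADVANCED
```

Using `<=` matches the "20 users or fewer" wording: the 20th recipient is still exempt, while a 21st pushes an unverified app onto the advanced path.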

The rollout of these rules is scheduled to begin in August, though the initial implementation will be targeted. Google has indicated that the verification rules will first be strictly enforced in specific markets, including Brazil, Indonesia, Singapore, and Thailand—regions that have historically seen high rates of mobile-based financial fraud. Following this regional pilot, a global expansion is expected to continue through 2027.

One remaining question is how these changes will be delivered and which Android versions they will reach. Google has not yet clarified whether the rules will be exclusive to newer releases such as Android 16 or 17, or whether they will be backported to older versions of the OS. Given Google's recent shift toward modularizing Android through "Project Mainline" and Google Play system updates, it is plausible that these security flows will reach devices running Android 14 or 15 through a background system update. That would let Google protect a much larger share of the global user base without waiting for manufacturers to ship full firmware updates.

In conclusion, while the "24-hour rule" and mandatory restarts represent a significant departure from the instant-gratification nature of modern technology, they reflect a necessary evolution in mobile security. Android is no longer a niche platform for enthusiasts; it is the primary computing device for billions of people, many of whom are vulnerable to predatory scams. By introducing these friction points, Google is attempting to preserve the soul of Android's openness while building a fortress around its most vulnerable users. For the seasoned Android veteran, the transition may be jarring, but the ability to opt in "indefinitely" suggests that the platform's core identity as a flexible, user-controlled environment will ultimately survive this security-focused transformation.